Developer Experience (DX) 101: How to evaluate it via diary study

Developer experience (DX) is the overall user experience (UX) developers have while working with a dev-related product, e.g., a programming language, SDK, API, framework, library, docs, or code examples. Having a great DX means that your users are more productive, more content, and ultimately advocates of your product: all the reasons to make sure that you have an awesome DX!

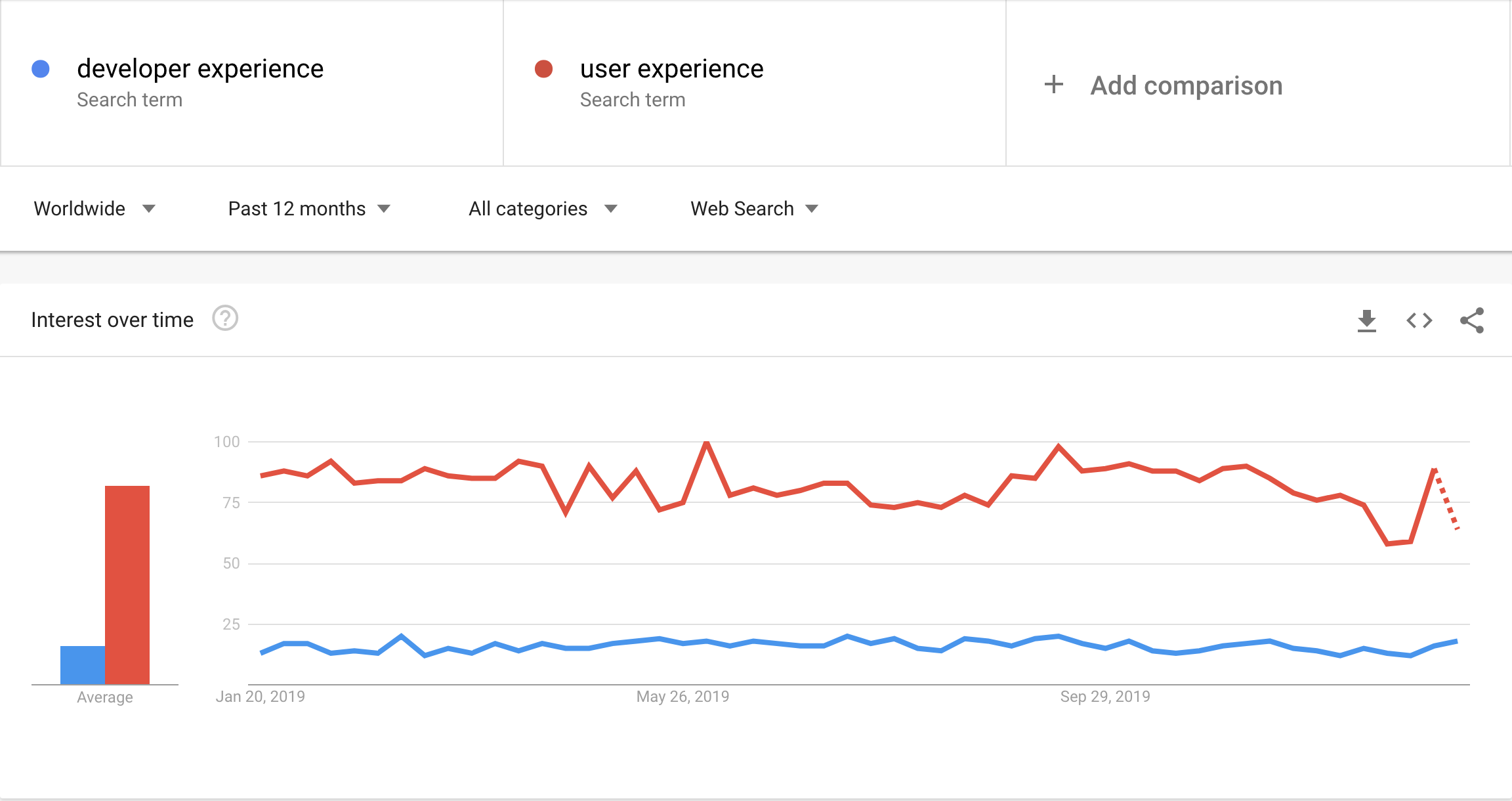

Developer experience is an important topic given that there are 370 languages used on GitHub [12] alone, 22 000 APIs registered at ProgrammableWeb [13], and somewhere between 21 000 000 [14] and 23 000 000 developers in the world [15]. This is also reflected in Google Trends, where we can see that it receives a fair amount of attention (1:4) when compared to its root discipline, user experience.

Unfortunately, developer experience is an underserved topic: there’s only a handful of articles that provide general guidelines and opinions on what makes a good DX [1, 2, 3, 4, 5, 6, 7, 8, 9, 10], and this is almost the entire list that can be found out there. Also, there are no practical guides on how to evaluate DX and what steps need to be taken in order to do it.

Because we want to bring a best-in-class DX to distributed app development, we have started a systematic assessment of the Daml SDK’s developer experience. Below you can find our step-by-step guide on how to conduct a diary study aimed at evaluating developers’ first contact with a dev-related product (Daml SDK).

Evaluating developer experience via a diary study

A diary study [16] is a great method for evaluating developers’ experience with a dev-related product as:

- It spans more than a single coding session

- It allows developers to move between different learning material

- It allows them to fit the work in their schedule

- It is the closest thing to how developers learn to code in the real world

As with any UX study there are three phases to making it happen: planning and preparing, executing, and analysing.

Preparation and planning

We start with a doc summarising all the instructions and tasks that study participants are asked to perform. This doc (e.g., a Google Doc) is then shared with the participants and it includes:

A first-page summary of the study timeline, a high-level overview of the tasks, and instructions on whom to contact, and how, with questions about the study. Having an open and clearly defined communication channel during the study is crucial as there are always things that need to be cleared up once the study starts.

A detailed description of the task(s) that clearly specifies what is in and what is out of scope. For example, if the task is to develop a task tracking application using your SDK/API/framework, it needs to be broken down into items that need to be covered and ones that don’t:

- Using the Daml SDK, develop a task tracking application.

- Each task has an issuer and an assignee.

- The issuer is the person who creates the task and suggests it to an assignee.

- The assignee can 1) accept or 2) reject the task.

- If the task is accepted it can then be marked by the assignee as completed. Only accepted tasks can be marked as completed.

- If the task is rejected nothing else can be done with it.

- Storing tasks permanently in a DB or any other storage is NOT part of this assignment
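
When writing such a task description it helps to check that the rules are unambiguous before handing them to participants. The list above can be sketched as a tiny state machine. This is plain Python rather than Daml, and every name in it (`Task`, `Status`, `accept`, `reject`, `complete`) is made up for illustration; it is only one possible reading of the rules, not a reference solution.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"    # created by the issuer, waiting for the assignee
    ACCEPTED = "accepted"
    REJECTED = "rejected"    # terminal: nothing else can be done
    COMPLETED = "completed"  # terminal


@dataclass
class Task:
    issuer: str
    assignee: str
    description: str
    status: Status = Status.PROPOSED

    def _require_assignee(self, actor: str) -> None:
        # Assumption: only the assignee may accept, reject, or complete.
        if actor != self.assignee:
            raise PermissionError("only the assignee may act on this task")

    def accept(self, actor: str) -> None:
        self._require_assignee(actor)
        if self.status is not Status.PROPOSED:
            raise ValueError("only a proposed task can be accepted")
        self.status = Status.ACCEPTED

    def reject(self, actor: str) -> None:
        self._require_assignee(actor)
        if self.status is not Status.PROPOSED:
            raise ValueError("only a proposed task can be rejected")
        self.status = Status.REJECTED

    def complete(self, actor: str) -> None:
        self._require_assignee(actor)
        if self.status is not Status.ACCEPTED:
            raise ValueError("only an accepted task can be completed")
        self.status = Status.COMPLETED
```

Nothing is persisted here, which matches the last item of the list: tasks only live in memory. If a rule in your description cannot be expressed this plainly, it is probably worth rewording before the study starts.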

As part of the task(s), provide any code snippets that are necessary for the task(s) but would be a potential waste of time if devs needed to write or google them on their own. For the above example, we could provide snippets for logging in users, as participants could already do that with the SDK/API/framework they are familiar with.

In the process of writing the task descriptions you will have to optimize the tasks to accommodate the study duration. Study duration depends on your budget, team size, and the task(s). We were able to get great results with a five-day study, asking the participants to invest one hour daily. Spreading the work over several days also makes it easier to provide help in between and therefore avoids participants getting stuck.

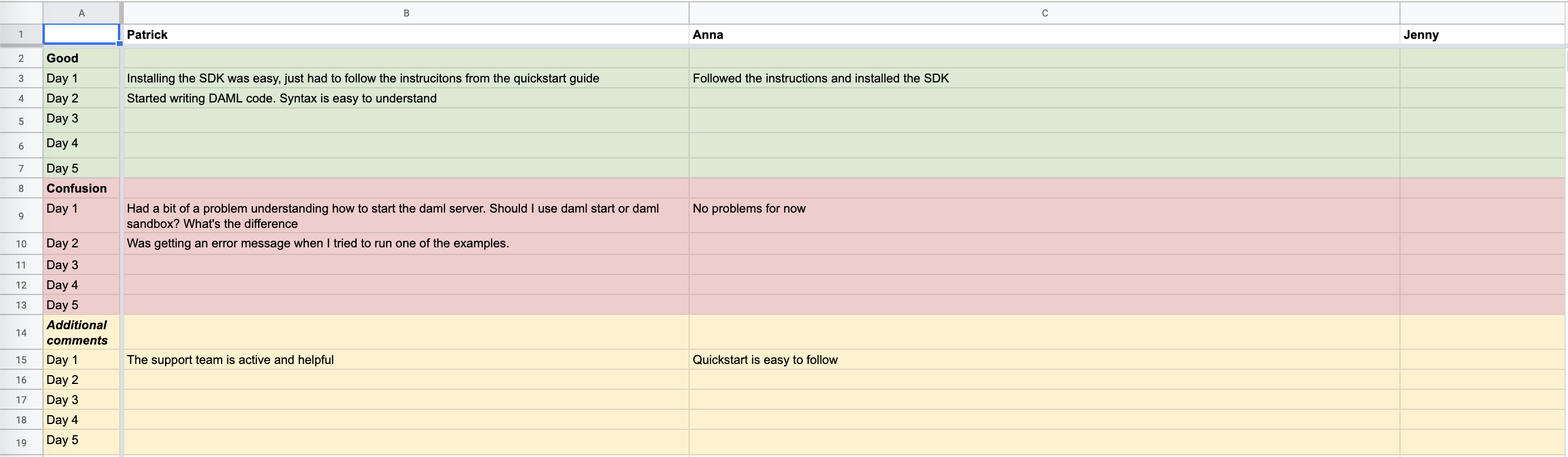

As the last part of the document you’ll prepare daily comment boxes/areas where participants can write what was good/bad while they were engaged with the task(s). Make sure to write enough pointers for what they should be writing about, e.g., for what was good:

- IDE support, documentation, language syntax, clear error messages, anything else that comes to mind

- Why was it good from your perspective?

- Please attach screenshots when applicable

and similarly for what went bad.

To wrap up the study, prepare a draft version of a post-study survey or interview. It’s going to be a draft version as you will adapt it with insights coming out of participants’ diaries. For a systematic assessment of first contact, include standard usability measures, e.g., single ease question [18] or number of errors.

Execution

For the execution phase it’s crucial to recruit your participants with a detailed description of your target developer profile in mind, the minimum being age, years of (professional) programming experience, and desired programming language/SDK/API/framework skills. The number of participants can vary - you can get good results with even 5 participants. For easy recruiting we chose to use TestingTime.

A big part of executing the study is reminding the participants about the tasks. You can turn this around: it’s more about seeing if they’re doing OK, if the instructions are clear, or if there’s anything else you could help them with.

During the study, make sure to read the diaries as they are filled in. This is really important as sometimes you need to ask clarifying questions or ask the participants to upload screenshots to make the issue clearer. Their entries will also inform your survey/interview (e.g., why haven’t you engaged on Slack?).

Data analysis

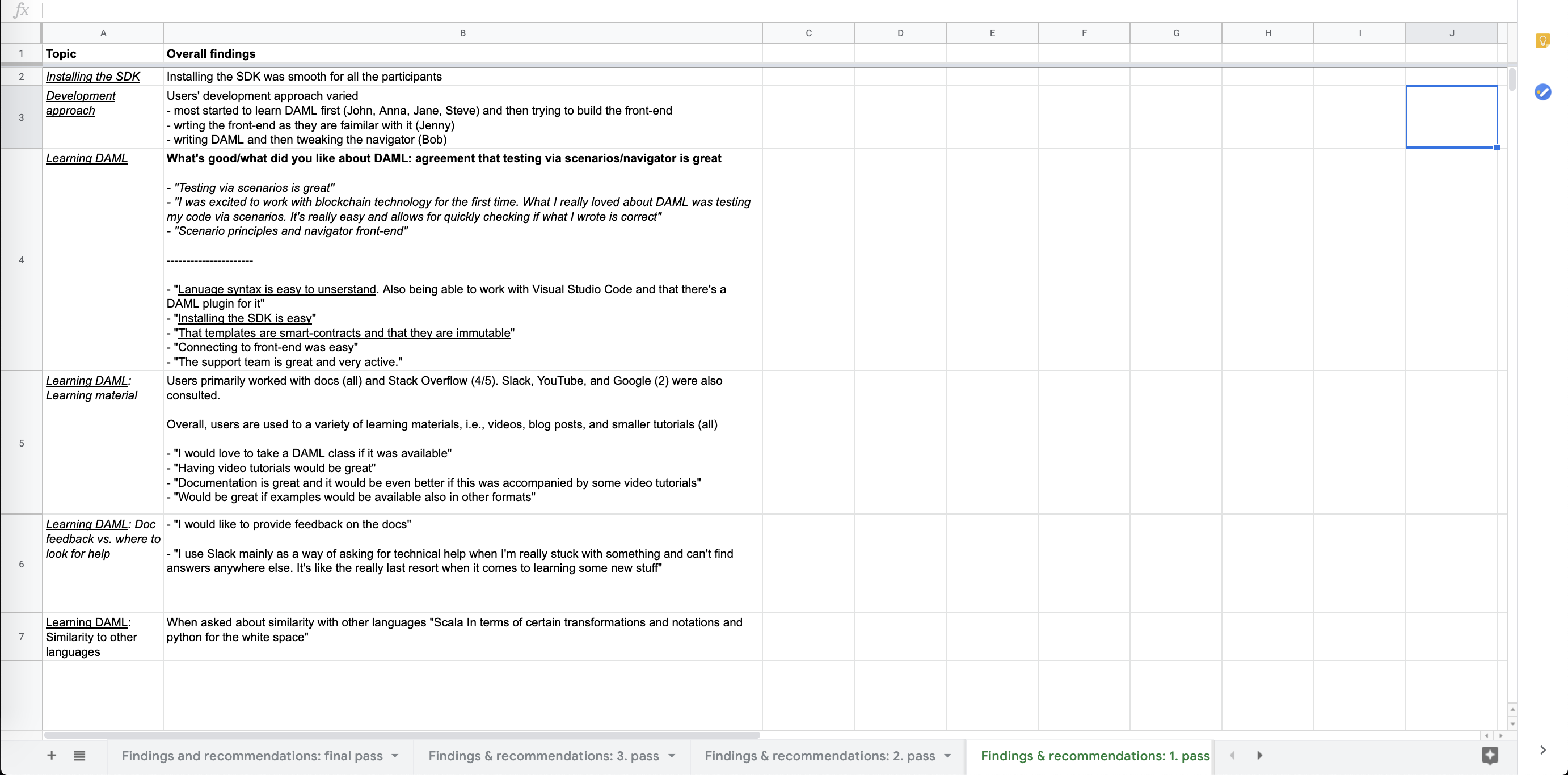

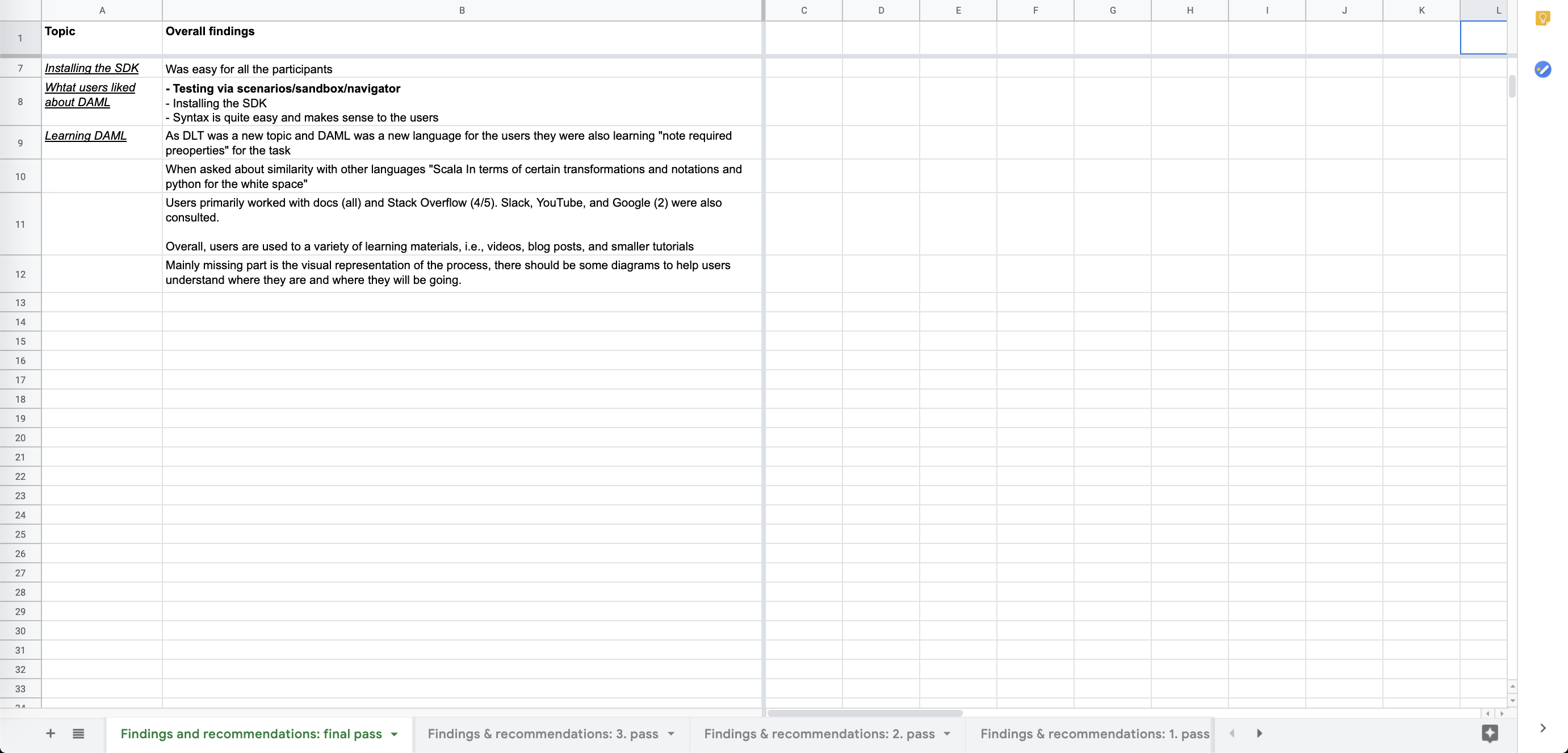

Once you have closed the study and collected the data you can start analysing it. As mentioned before, there are some standard usability measures (SEQ, number of errors) that could help assess what needs to be tackled. I personally prefer to do affinity diagram analysis [17], grouping and regrouping items a number of times (until it makes sense).
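
For the standard measures, a few lines of code are enough to get an overview. Here is a minimal sketch, assuming each participant gave one SEQ rating on the usual 1 to 7 scale and that you counted their errors yourself; the function name and the field names `participant`, `seq`, and `errors` are hypothetical.

```python
from statistics import mean, median


def summarise_measures(responses):
    """Summarise SEQ ratings and error counts across participants.

    `responses` is a list of dicts with the (hypothetical) keys
    'participant', 'seq' (1 = very difficult, 7 = very easy) and 'errors'.
    """
    seq_scores = [r["seq"] for r in responses]
    for score in seq_scores:
        if not 1 <= score <= 7:
            raise ValueError(f"SEQ rating out of range: {score}")
    return {
        "n": len(responses),
        "seq_mean": round(mean(seq_scores), 2),
        "seq_median": median(seq_scores),
        "errors_total": sum(r["errors"] for r in responses),
    }
```

With around five participants these numbers are only a rough signal, so I treat them as a pointer to where to look in the diaries rather than as a result on their own.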

Now, traditionally affinity diagram analysis is done with sticky notes, in a room, with a team that goes over the data together. Over the years I’ve learned to work with Excel/Google Sheets instead as it suits today’s fast-paced working environment: people are busy and have limited time (even though it’s a lot more fun to do it the traditional way).

As diaries and survey entries are already in digital form you can copy them into separate sheets within the same doc. For the diary entries it may be helpful to have participant IDs as rows and days as columns, and to freeze the header row and ID column for easier navigation.
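
If you would rather not copy entries by hand, the participant-by-day layout is easy to generate. The sketch below assumes you have exported the diary entries as `(participant_id, day, text)` tuples; that input shape and the function names are my own assumptions, not something a particular tool gives you.

```python
import csv
import io


def diary_grid(entries):
    """Arrange (participant_id, day, text) tuples as rows = participants, columns = days."""
    days = sorted({day for _, day, _ in entries})
    participants = sorted({pid for pid, _, _ in entries})

    # Several entries for the same participant and day are joined into one cell.
    cells = {}
    for pid, day, text in entries:
        cells.setdefault((pid, day), []).append(text)

    rows = [["participant"] + [f"day {d}" for d in days]]
    for pid in participants:
        rows.append([pid] + [" / ".join(cells.get((pid, d), [])) for d in days])
    return rows


def to_csv(rows):
    """Serialise the grid as CSV text that Excel or Google Sheets can import."""
    buffer = io.StringIO()
    csv.writer(buffer).writerows(rows)
    return buffer.getvalue()
```

Import the resulting CSV into a sheet, then freeze the first row and first column there.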

Start the analysis by copying significant items to a new sheet. I typically have one row for the overarching theme and then another one for supporting items. For each new iteration just duplicate the sheet and continue the analysis.
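
The theme-plus-supporting-items layout can also be mirrored in code, which is handy for checking how well each theme is backed up. This is a rough sketch that assumes you have already tagged each significant item by hand as a `(theme, participant_id, quote)` tuple; the grouping itself is still your judgment, the code only counts.

```python
from collections import defaultdict


def group_by_theme(tagged_items):
    """Collect (participant_id, quote) pairs under their overarching theme."""
    themes = defaultdict(list)
    for theme, pid, quote in tagged_items:
        themes[theme].append((pid, quote))
    return dict(themes)


def theme_summary(themes):
    """Return (theme, distinct participants, quotes), best supported theme first."""
    summary = []
    for theme, items in themes.items():
        participants = {pid for pid, _ in items}
        summary.append((theme, len(participants), len(items)))
    # Sort by participant count, then quote count, then name for stable output.
    summary.sort(key=lambda row: (-row[1], -row[2], row[0]))
    return summary
```

A theme mentioned by only one participant, or with very few quotes, is a hint that it may need regrouping in the next iteration.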

Once you feel that data is in a good enough state, share it with the team/ stakeholders for their input. For me, a good enough state is when you have regrouped 3-5 times and you have enough quotes to support the categories. The last part is crucial as team members/ stakeholders benefit from reading the quotes and empathizing with what developers are going through.

As a very last step prioritise discovered issues and ideate how to fix them. And voila! That’s it. Oh, one more big and important thing: make sure to execute on fixes that you and the team deem important. You’re doing it for the devs so it has to go live ;)

Thnx to Anthony, Bernhard, and Moritz for their review and feedback on the blog post!

TL;DR: Diary studies are a great way of evaluating developer experience (DX) and developers’ first contact with your dev-related product as they are the closest thing to how developers learn to code in the real world. When planning the study, make sure that you have a doc that participants can refer back to with 1) study instructions and 2) detailed tasks. Provide code snippets for parts that are not important for the task and are a waste of time. When executing the study, pay attention to diary entries as you might need to ask for clarifications/screenshots. These entries will also inform your post-study survey/interview. Analyse the data with affinity diagram analysis using Excel/Google Sheets.

References

[1] https://hackernoon.com/the-best-practices-for-a-great-developer-experience-dx-9036834382b0

[2] https://medium.com/@albertcavalcante/what-is-dx-developer-experience-401a0e44a9d9

[4] https://dev.to/stereobooster/developer-experience-how-i-missed-it-before-47go

[5] https://uxdesign.cc/contributing-great-developer-experience-designer-e1f497b0fb4

[6] https://www.hellosign.com/blog/the-rise-of-developer-experience

[7] https://www.aavista.com/how-to-create-a-good-developer-experience-for-your-api/

[8] https://www.moesif.com/blog/api-guide/api-developer-experience/

[10] https://blog.apimatic.io/what-exactly-is-developer-experience-1646b813df14

[11] https://uxmastery.com/resources/techniques/

[12] https://octoverse.github.com/#top-languages

[13] https://www.programmableweb.com/apis/directory

[14] https://insights.stackoverflow.com/survey/2019#overview

[15] https://www.daxx.com/blog/development-trends/number-software-developers-world

[16] https://www.testingtime.com/en/blog/diary-studies-a-practical-guide/