Secure Daml Infrastructure - Part 1 PKI and certificates

Daml is a smart contract language designed to abstract away much of the boilerplate and lower-level issues, allowing developers to focus on how to model and secure business workflows. A Daml Ledger and a Daml model together define how workflows control access to business data, what privacy guarantees apply, and what actions parties can take to update the state model and move workflows forward. Separately, the underlying persistence stores (whether DLT or traditional databases) offer different tradeoffs in security guarantees, trust assumptions, and operational and infrastructure complexity.

In this post we focus on lower-level infrastructure and connectivity concerns: how the processes making up the Ledger client and server components authenticate and authorise, at a coarse-grained level, the connections and command submissions to a ledger. In particular, we focus on:

- Secure HTTPS connections over TLS with mutual authentication using client certificates.

- Ledger API submission authorization using HTTP security tokens defining the specific claims about who the application can act as.

This entails some specific technologies and concepts, including:

- Public Key Infrastructure (PKI), Certificate Authorities (CA) and secure TLS connections

- Authentication and Authorization Tokens, specifically JSON Web Tokens (JWT) and JSON Web Key Sets (JWKS)

There are many other aspects of deploying and securing an application in production that we do not attempt to cover in this post. These include technologies and processes like network firewalls, network connectivity and/or exposure to the Internet, production access controls, system and OS hardening, resiliency and availability. These are among the standard security concerns for any application and are an important part of any deployment.

To demonstrate how connectivity security can be implemented and tested, we have provided a reference application - https://github.com/digital-asset/ex-secure-daml-infra - that implements a self-signed PKI CA hierarchy and shows how to obtain and use JWT tokens from an OAuth identity provider (we use Auth0 (https://auth0.com) as a reference, but Okta, OneLogin, Ping or other OAuth providers would work as well) or from a local JWT signing provider. This builds on the previous post, Easy authentication for your distributed app with Daml and Auth0, which focused on end-user authentication and authorisation.

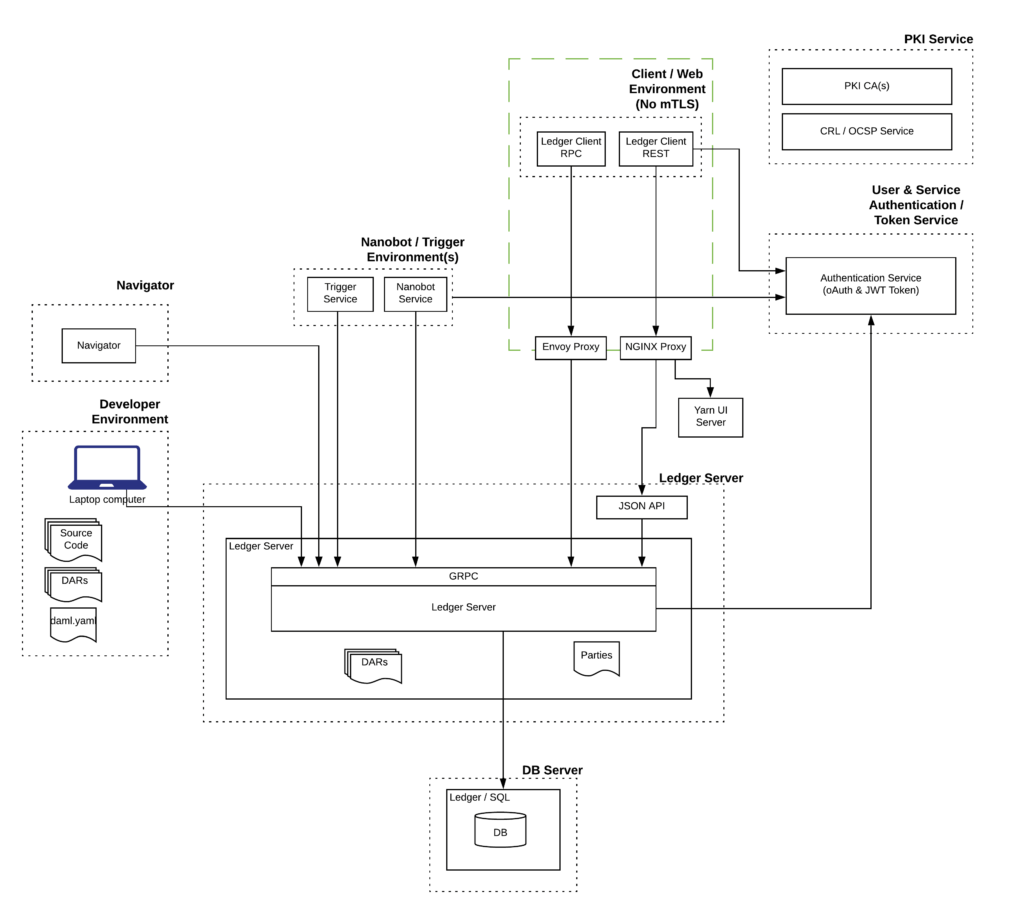

The deployed components are detailed in the following diagram:

At the center is the Ledger Server, which in this case uses Daml Driver for PostgreSQL for persistence. A PKI service is provided to generate and issue all TLS, client and signing certificates. We connect to a token provider, either an OAuth service like Auth0 or a self-hosted JWT signing service (for more automated testing scenarios like CI/CD pipelines). Clients connecting to the Ledger include end-user applications, in this case a Daml React web application, as well as automation services (in the form of Daml Triggers and/or Python automations).

We have included the JSON API service for REST-based application access, which requires a front-end HTTPS reverse proxy, in this case NGINX, when operated in secure mode. We have also included an Envoy proxy for gRPC, which allows the implementation of many other security concerns (for example, DoS rate-limiting protection, authentication token mapping and auditing, amongst many others). In this case it is a simple forwarding proxy, as these capabilities are out of scope for this article.

For completeness we also include how a developer or operator might connect securely to the Ledger for Daml DAR uploads or using Navigator. In practice, most developers develop in unauthenticated mode against locally hosted ledgers.

Secure Connections

The first step to ensure a secure ledger is to enforce secure connections between services. This is accomplished with TLS connections and mutual authentication using client certificates.

If you understand the concepts of PKI, TLS and certificates, you may choose to skip the following backgrounder.

Background

Transport Layer Security (TLS) is a security protocol that allows an application (often a web browser, but also automation services) to connect to a server and negotiate a secure channel between the two of them. The protocol relies upon Public Key Infrastructure (PKI), which in turn utilises public / private keys and certificates (signed public keys) to create trust between the two sides. TLS, often seen as HTTPS (HTTP over TLS) for web browser use, is most often used to allow an application or browser user to validate that they are connecting to an expected endpoint - the user can trust they are really connecting to the web site they want. However, TLS also allows a mutual authentication mode, where the client also provides a client certificate, allowing both sides to authenticate each other - the server now also knows which client is connecting to it.

So what are private and public keys and a “certificate”? Cryptography is used to create a pair of keys (large numbers calculated using one of several well-known algorithms, e.g. RSA or EC). The keys are mathematically linked, but knowledge of the public key does not allow calculation of the private key. The “private” key is known only to the owner of the key pair, while the “public” key can be distributed to anyone else. Related cryptographic algorithms can then be used to sign or encrypt data: the owner signs with the private key and anyone holding the public key can validate the signature, or a sender encrypts with the public key and only the holder of the private key can decrypt.
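The sign-and-validate flow described above can be tried out with the openssl command line. This is a minimal sketch, not taken from the reference app: it assumes OpenSSL 1.1.1 or later is installed, and the file names and message text are made up for illustration.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"

# Generate an RSA private key; this file stays with the owner.
openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 -out private.pem 2>/dev/null

# Derive the matching public key, which can be handed to anyone.
openssl pkey -in private.pem -pubout -out public.pem

# The owner signs a message with the private key...
printf 'transfer 10 units to Alice\n' > message.txt
openssl dgst -sha256 -sign private.pem -out message.sig message.txt

# ...and any holder of the public key can validate the signature.
verify_result=$(openssl dgst -sha256 -verify public.pem -signature message.sig message.txt)
echo "$verify_result"

# A modified message no longer matches the signature.
printf 'transfer 1000 units to Mallory\n' > tampered.txt
tamper_detected=no
if ! openssl dgst -sha256 -verify public.pem -signature message.sig tampered.txt >/dev/null 2>&1; then
  tamper_detected=yes
fi
echo "tampering detected: $tamper_detected"
```

Note that only public.pem and the signature need to leave the owner's machine; the private key is never shared.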

In many cases a public key (a large number) by itself is insufficient or impractical, so a mechanism is needed to link metadata or attributes about the key holder to the public key. This might include identity name and attributes, key use, key restrictions, key expiry times, etc. To do this, a Certificate Authority (CA) signs the metadata together with the public key, resulting in a certificate. Thus anyone receiving a copy of the certificate can get the public key along with data about how long it is valid for, who the owner is and any restrictions on use. Various industry standards, in particular X509, are used to structure this data. Applications are then configured to trust one or more Certificate Authorities and subsequently validate the certificates and check that they are not revoked. Modern web browsers come with a default set of trusted CAs that issue public certificates for use on the Internet.

As a general best practice, Certificate Authorities are structured into a hierarchy of CAs - a root CA that forms a root of trust for all CAs and certificates issued from this service, and sub or intermediate CAs that issue actual certificates used by applications. Clients are then configured to trust this root so they can then validate any certificate they come across against this hierarchy. The Root CA is frequently set up with a long lifetime and the private keys are stored very safely offline in secure vaults. Full operation and maintenance of a production PKI involves many security procedures and practices, along with technology, to ensure the ongoing safety of the CA keys. “Signing ceremonies” involve several trusted individuals to ensure no compromise to the keys.

Testing PKI and Certificates

Note: the following code snippets come from the make-certs.sh bash script in the reference app repo. Please see the full code for all the necessary steps - we have excluded some details for brevity.

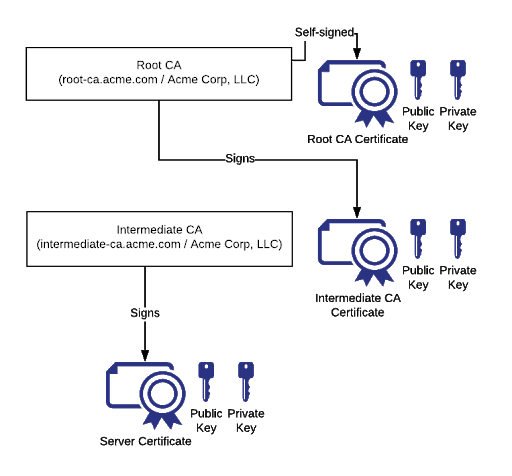

To demonstrate use of TLS and mutual authentication, we set up a demonstration PKI Certificate Authority (CA) hierarchy and issue certificates. This is diagrammed below:

The Root CA forms the root of the trust hierarchy and is created as follows:

We have created a Root CA called root-ca.acme.com with an X509 name of /C=US/ST=New York/O=Acme Corp, LLC/CN=root-ca.acme.com and a lifetime of 7300 days (20 years). The example allows you to configure the name; the default for $DOMAIN is a test domain of “acme.com” for “Acme Corp, LLC”.

We won’t go into the specifics, but the Root CA openssl.conf file defines extension attributes in its [v3_ca] section: basic constraints (i.e. the certificate is allowed to act as a CA) and key usage (signatures and certificate signing).
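As a rough illustration of the Root CA step, the following sketch creates a self-signed root with the name and lifetime given above. It is not the make-certs.sh code: the file names, the 4096-bit key size and the exact contents of the [v3_ca] section are assumptions, and a small throwaway config file stands in for the repo's openssl.conf.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"
DOMAIN=${DOMAIN:-acme.com}

# Minimal stand-in for the Root CA openssl.conf (assumed contents).
cat > root.cnf <<EOF
[req]
distinguished_name = dn
x509_extensions    = v3_ca
prompt             = no

[dn]
C  = US
ST = New York
O  = Acme Corp, LLC
CN = root-ca.${DOMAIN}

[v3_ca]
basicConstraints     = critical, CA:true
keyUsage             = critical, digitalSignature, cRLSign, keyCertSign
subjectKeyIdentifier = hash
EOF

# Root private key; in a real PKI this is kept offline.
openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:4096 -out root.key.pem 2>/dev/null
chmod 400 root.key.pem

# Self-signed root certificate valid for 7300 days (20 years).
openssl req -x509 -new -config root.cnf -key root.key.pem -sha256 -days 7300 -out root.cert.pem

# Dump the certificate so the name and extensions can be inspected.
openssl x509 -in root.cert.pem -noout -text > root.txt
grep -A1 "Basic Constraints" root.txt
```

The text dump is a convenient way to confirm that the CA flag and key usage ended up in the certificate before anything is signed with it.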

Since the Root CA is often offline and securely protected, it is common for an Intermediate CA to be created to issue the actual certificates used for TLS and client authentication and code signing. There are many tradeoffs on how to structure a CA hierarchy, including separate CAs for specific uses, organisational structures, etc but this is beyond the scope of this blog.

For this reference, we create a single Intermediate CA as follows:

Here the Intermediate private key is created. We then generate a Certificate Signing Request (CSR) which is then sent to the Root CA for validation and signing and an Intermediate CA certificate is returned. In reality, the CSR is submitted to the Root CA and there would be a set of out of band security checks on the requestor before the root would sign and return a certificate. You will see this whenever you request a certificate from a public trusted CA - often Domain Verification (DV) which relies upon DNS entries or web files to verify you own the domain or server.

As with the Root certificate, attributes ([v3_intermediate_ca] in the Root openssl.conf) are set on the certificate for name, constraints and key usage, lifetime (10 years) and the access points to retrieve the Certificate Revocation List (CRL) and OCSP service. These last two are used by clients to check the current status of a certificate and whether the CA has revoked it. We also set a pathlen constraint of 0, which states that no sub-CAs are allowed under this Intermediate CA; only endpoint certificates can be issued.
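The key, CSR and signing steps for the Intermediate CA can be sketched as follows. Several things here are assumptions rather than the reference script: a throwaway root is created inline so the example is self-contained, the name intermediate-ca.acme.com is hypothetical, signing uses `openssl x509 -req` for brevity (a full CA setup would more typically use `openssl ca` with its own database), and the CRL and OCSP access points are left out.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"

# Extension sections modelled on the ones named in the text (assumed contents).
cat > ca.cnf <<'EOF'
[req]
distinguished_name = dn
prompt             = no

[dn]
CN = placeholder

[v3_ca]
basicConstraints     = critical, CA:true
keyUsage             = critical, digitalSignature, cRLSign, keyCertSign
subjectKeyIdentifier = hash

[v3_intermediate_ca]
basicConstraints     = critical, CA:true, pathlen:0
keyUsage             = critical, digitalSignature, cRLSign, keyCertSign
subjectKeyIdentifier = hash
EOF

# Throwaway root CA, standing in for the real (offline) root.
openssl req -x509 -newkey rsa:2048 -nodes -config ca.cnf -extensions v3_ca \
  -subj "/CN=root-ca.acme.com" -days 7300 \
  -keyout root.key.pem -out root.cert.pem 2>/dev/null

# 1. The Intermediate CA creates its private key and a CSR.
openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 -out intermediate.key.pem 2>/dev/null
openssl req -new -config ca.cnf -key intermediate.key.pem \
  -subj "/C=US/ST=New York/O=Acme Corp, LLC/CN=intermediate-ca.acme.com" \
  -out intermediate.csr.pem

# 2. The root signs the CSR for 3650 days (10 years) with pathlen:0.
openssl x509 -req -in intermediate.csr.pem -CA root.cert.pem -CAkey root.key.pem \
  -CAcreateserial -sha256 -days 3650 \
  -extfile ca.cnf -extensions v3_intermediate_ca \
  -out intermediate.cert.pem 2>/dev/null

# 3. Build the trust chain file and check the result.
cat intermediate.cert.pem root.cert.pem > ca-chain.cert.pem
openssl verify -CAfile root.cert.pem intermediate.cert.pem
openssl x509 -in intermediate.cert.pem -noout -text > intermediate.txt
```

The ca-chain.cert.pem file produced at the end is the kind of combined Root plus Intermediate file that services are later pointed at as their CA trust chain.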

Now we have the CA hierarchy configured, we can issue real endpoint certificates. Let’s create a certificate for the Ledger Server:

IMPORTANT NOTE: We generated an RSA private key, but specifically as a PKCS#8 format key file, which is why we use the “genpkey” command and have to specify the algorithm. We need to do this because many TLS frameworks (including those used by Ledger Server, PostgreSQL and NGINX) will only work with PKCS#8 format key files. The difference between PKCS#8 and PKCS#1 is that the former also contains additional metadata about the key algorithm and supports more than RSA, e.g. Elliptic Curve (EC). If you look at the files, the main visible difference is the first line.

PKCS#1: -----BEGIN RSA PRIVATE KEY-----

PKCS#8: -----BEGIN PRIVATE KEY----- <- Required format for TLS Frameworks
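If you already have a key in the older format, it can be converted. The sketch below assumes OpenSSL 1.1.1 or later and uses made-up file names; it generates a PKCS#8 key, writes a PKCS#1 copy, converts that copy back and prints the first line of each file.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"

# genpkey writes an unencrypted PKCS#8 key.
openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 -out key.pkcs8.pem 2>/dev/null

# Write the same key in the traditional PKCS#1 layout.
openssl pkey -in key.pkcs8.pem -traditional -out key.pkcs1.pem

# Convert a PKCS#1 key back to unencrypted PKCS#8.
openssl pkcs8 -topk8 -nocrypt -in key.pkcs1.pem -out key.roundtrip.pem

# The first line of each file shows which format it is.
head -n 1 key.pkcs1.pem
head -n 1 key.pkcs8.pem
head -n 1 key.roundtrip.pem
```

The `-nocrypt` option keeps the converted key unencrypted, which is what services that read the key at startup without a passphrase prompt will need.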

A client certificate is constructed via similar steps:

An additional attribute that we set on the server and client certificates is subjectAltName (SAN), which is generally the IP address and/or DNS name of the server. Modern browsers now enforce this practice to tie a certificate to a specific destination DNS name, not just an X509 name.
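To make the endpoint steps concrete, here is a sketch that issues one server and one client certificate, each with a SAN. It is illustrative only: a single throwaway CA stands in for the Intermediate CA, the host names ledger.acme.com and client1.acme.com and the helper function `issue_cert` are hypothetical, and the extension sections are assumptions rather than the contents of the repo's config.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"

cat > leaf.cnf <<'EOF'
[req]
distinguished_name = dn
prompt             = no

[dn]
CN = placeholder

[v3_ca]
basicConstraints     = critical, CA:true
keyUsage             = critical, keyCertSign, cRLSign
subjectKeyIdentifier = hash

[server_cert]
basicConstraints = CA:false
keyUsage         = critical, digitalSignature, keyEncipherment
extendedKeyUsage = serverAuth
subjectAltName   = DNS:ledger.acme.com, IP:127.0.0.1

[client_cert]
basicConstraints = CA:false
keyUsage         = critical, digitalSignature
extendedKeyUsage = clientAuth
subjectAltName   = DNS:client1.acme.com
EOF

# Throwaway issuing CA (the reference app uses its Intermediate CA here).
openssl req -x509 -newkey rsa:2048 -nodes -config leaf.cnf -extensions v3_ca \
  -subj "/CN=intermediate-ca.acme.com" -days 365 \
  -keyout ca.key.pem -out ca.cert.pem 2>/dev/null

# Hypothetical helper: $1 = common name, $2 = extension section to apply.
issue_cert() {
  # PKCS#8 private key for the endpoint.
  openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 -out "$1.key.pem" 2>/dev/null
  # CSR carrying the endpoint's name.
  openssl req -new -config leaf.cnf -key "$1.key.pem" \
    -subj "/O=Acme Corp, LLC/CN=$1" -out "$1.csr.pem"
  # The CA signs the CSR and attaches the extensions, including the SAN.
  openssl x509 -req -in "$1.csr.pem" -CA ca.cert.pem -CAkey ca.key.pem \
    -CAcreateserial -sha256 -days 365 \
    -extfile leaf.cnf -extensions "$2" -out "$1.cert.pem" 2>/dev/null
}

issue_cert ledger.acme.com server_cert
issue_cert client1.acme.com client_cert

openssl verify -CAfile ca.cert.pem ledger.acme.com.cert.pem client1.acme.com.cert.pem
openssl x509 -in ledger.acme.com.cert.pem -noout -text > server.txt
openssl x509 -in client1.acme.com.cert.pem -noout -text > client.txt
grep -A1 "Subject Alternative Name" server.txt
```

Keeping the server and client extensions in separate sections makes the difference in extended key usage (serverAuth versus clientAuth) explicit.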

The make-certs.sh script generates certificates for each endpoint, specifically the PostgreSQL DB, Ledger Server, web proxy, Envoy proxy and auth service.

In the reference app directory this is seen as a file system hierarchy:

- Certs

- Root

- Certs

- Private

- Csr

- Intermediate

- Certs

- Private

- Csr

- Server

- Certs

- Private

- Client

- Root

Enabling Certificates for reference app services

Well done for getting this far. We now have a 2-tier CA hierarchy created and have issued TLS certificates and a client certificate. How do we enable their use within each of the services?

PostgreSQL DB

We use Docker images to run PostgreSQL for persistence of the state of the Ledger. We first look at the settings required to enable TLS on PostgreSQL.

This tells PostgreSQL where to find the server certificate, private key and the CA trust chain (the Root and Intermediate certificates). The remaining parameters tell PostgreSQL to enforce TLS 1.2 and a secure set of ciphers.
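For orientation, settings of this kind look roughly like the fragment written by the sketch below. The parameter names are standard PostgreSQL 12 server settings, but the file paths, the output file name and the cipher string are assumptions and are not copied from the reference app.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"

# Write an illustrative TLS fragment for postgresql.conf (paths are hypothetical).
cat > postgresql-tls.conf <<'EOF'
ssl = on
ssl_cert_file = '/certs/server.cert.pem'       # server certificate
ssl_key_file  = '/certs/server.key.pem'        # server private key
ssl_ca_file   = '/certs/ca-chain.cert.pem'     # Root + Intermediate trust chain
ssl_min_protocol_version = 'TLSv1.2'           # refuse anything older than TLS 1.2
ssl_ciphers = 'HIGH:!aNULL:!MD5'               # example cipher list only
ssl_prefer_server_ciphers = on
EOF

cat postgresql-tls.conf
```

The same settings can also be passed to the server individually as `-c name=value` options instead of living in a config file.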

The full Docker command is:

This runs a copy of the PostgreSQL 12 Docker image, maps the certificates from the local file system into the container, sets a different default password (good security practice) and exposes port 5432 for use.

Ledger Server

The Ledger Server now needs to be run with TLS enabled. This is done via:

For certificate use, the key parameters are:

- --client-auth require

- --cacrt <CA chain>

- --pem <private key file>

- --crt <public certificate>

- --sql-backend-jdbcurl

The client-auth parameter enforces the use of client mutual authentication (it can also be set to none or optional), so clients will need to present a client certificate for access. The cacrt, pem and crt parameters enable TLS and link to the CA hierarchy trust chain. The key point for sql-backend-jdbcurl is the “ssl=on” parameter, which enables use of a TLS connection to the database.

Navigator, Trigger and other Daml clients

The Daml services that connect to a Ledger, including Navigator (web based console for the ledger), Trigger (Daml automation) and JSON API (REST API proxy), need to be enabled for TLS and client certificate access (if required).

If we use an example of a Daml Trigger:

The important parameters are:

- tls

- pem, crt, cacrt

The “tls” parameter enables use of TLS for Ledger API connections. The pem and crt parameters point to the private key and public certificate of the client certificate that identifies the application. The cacrt parameter is the same as for the Ledger Server and points to the CA trust chain.
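Before passing pem, crt and cacrt files to a service, it can save debugging time to confirm that they belong together. The check below is a suggestion rather than part of the reference scripts: the function name `check_cert_pair` and all file names are hypothetical, and a throwaway self-signed certificate is used so the example runs on its own.

```shell
set -eu
workdir=$(mktemp -d)
cd "$workdir"

# Hypothetical helper: $1 = certificate (crt), $2 = private key (pem), $3 = CA chain (cacrt).
check_cert_pair() {
  # The certificate must chain up to the supplied CA file.
  openssl verify -CAfile "$3" "$1" >/dev/null 2>&1 || return 1
  # The public key inside the certificate must match the private key.
  cert_pub=$(openssl x509 -in "$1" -noout -pubkey)
  key_pub=$(openssl pkey -in "$2" -pubout)
  [ "$cert_pub" = "$key_pub" ]
}

# Throwaway self-signed certificate and an unrelated key for the demo.
cat > demo.cnf <<'EOF'
[req]
distinguished_name = dn
x509_extensions    = v3_ca
prompt             = no

[dn]
CN = demo-ca.acme.com

[v3_ca]
basicConstraints = critical, CA:true
EOF
openssl req -x509 -newkey rsa:2048 -nodes -config demo.cnf -days 1 \
  -keyout good.key.pem -out demo.cert.pem 2>/dev/null
openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:2048 -out other.key.pem 2>/dev/null

if check_cert_pair demo.cert.pem good.key.pem demo.cert.pem; then matching=ok; else matching=failed; fi
if check_cert_pair demo.cert.pem other.key.pem demo.cert.pem; then unrelated=ok; else unrelated=failed; fi
echo "matching key: $matching, unrelated key: $unrelated"
```

With real files, the three arguments would be the same paths given to the crt, pem and cacrt parameters.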

Other applications will have framework or language specific ways to enable TLS and client certificates.

Summary & Next Steps

So far we have described how to set up and configure a two-tier PKI hierarchy and issue certificates. We’ve also described how to enable services to use these certificates.

The provided scripts can be used to create and issue further certificates allowing you to try out various configurations. The scripts have been parameterised so you can change some aspects of the environment for your purposes:

- DOMAIN=<DNS Domain, default: acme.com>

- DOMAIN_NAME=<Name of company, default: "Acme Corp, LLC">

- CLIENT_CERT_AUTH=<enforce mutual authentication, TRUE|FALSE>

- LEDGER_ID=<Ledger ID, CHANGE FOR YOUR USE!, default: "2D105384-CE61-4CCC-8E0E-37248BA935A3">

Further details on steps and parameters are described in the reference documentation.

Client Authorization, JWT and JWKS

The next step is how to authorise the client's actions. This uses HTTP security headers and JSON Web Tokens, and is the topic of the next blog post, coming soon. If you are interested in Daml and Auth0, you can check the previous post here:

Easy authentication for your distributed app with Daml and Auth0